Sovereign Multi-Tenant GenAI Platform for Scalable E-Learning Content Generation

Key Challenges

Collow needed to build a scalable multi-tenant GenAI platform while ensuring strict data isolation and security across shared infrastructure. Integrating diverse knowledge sources such as S3, Google Drive, SharePoint, and Confluence added complexity to ingestion and synchronization. Meeting enterprise requirements around data residency, governance, and auditability while maintaining high performance for real-time content generation posed a further architectural challenge.

Key Results

Collow successfully launched a sovereign-ready, multi-tenant RAG-based platform that enables secure, scalable e-learning content generation. The solution reduced manual content creation effort by 60–70%, improved content retrieval efficiency by up to 5x, and supports 10K+ queries per day with low latency. With strong data isolation, EU-based data residency, and seamless integrations, the platform is now ready for enterprise and regulated market deployment.

Overview

Collow.ai is an AI-powered e‑learning company that helps organizations turn their existing knowledge (slides, PDFs, documentation) into scalable, interactive digital training and academies. It reduces the effort of manual course creation by automating content generation, translation, and personalization.

To improve its digital learning products and simplify processes for customers, Collow wanted to integrate more automation and GenAI capabilities into its learning setup. The main objective was a multi-tenant SaaS platform that lets organizations generate highly customized e‑learning courses using Retrieval-Augmented Generation (RAG), while keeping each client’s knowledge base and vector store isolated on shared infrastructure.

The result is the CollowCreator RAG, ideal for organizations that want to securely turn internal knowledge into personalized learning content, capable of:

- Generating training content directly from internal corporate knowledge

- Maintaining full control over what data the AI can access

- Integrating seamlessly with SharePoint, Google Workspace, and Confluence

- Scaling efficiently to handle large knowledge bases and frequent content updates

Challenges

Collow needed to balance scalability with strict data control, especially in a shared multi-tenant environment. The core challenge was enabling multiple organizations to operate on the same infrastructure without compromising data isolation or security boundaries.

The platform had to support diverse knowledge sources such as S3, Google Drive, SharePoint, and Confluence in a consistent and scalable manner, which introduced complexity in ingestion and synchronization. Enforcing data residency and keeping data within controlled boundaries during ingestion and LLM processing was critical, particularly given enterprise and regulatory expectations.

From a governance perspective, maintaining end-to-end auditability and traceability across distributed components added another layer of complexity, all while keeping the solution operationally efficient and aligned with digital sovereignty and compliance expectations.

On top of that, the system had to handle high-volume ingestion and processing workloads while still delivering near real-time query responses, to give end users a smooth experience with educational content generation.

Solution

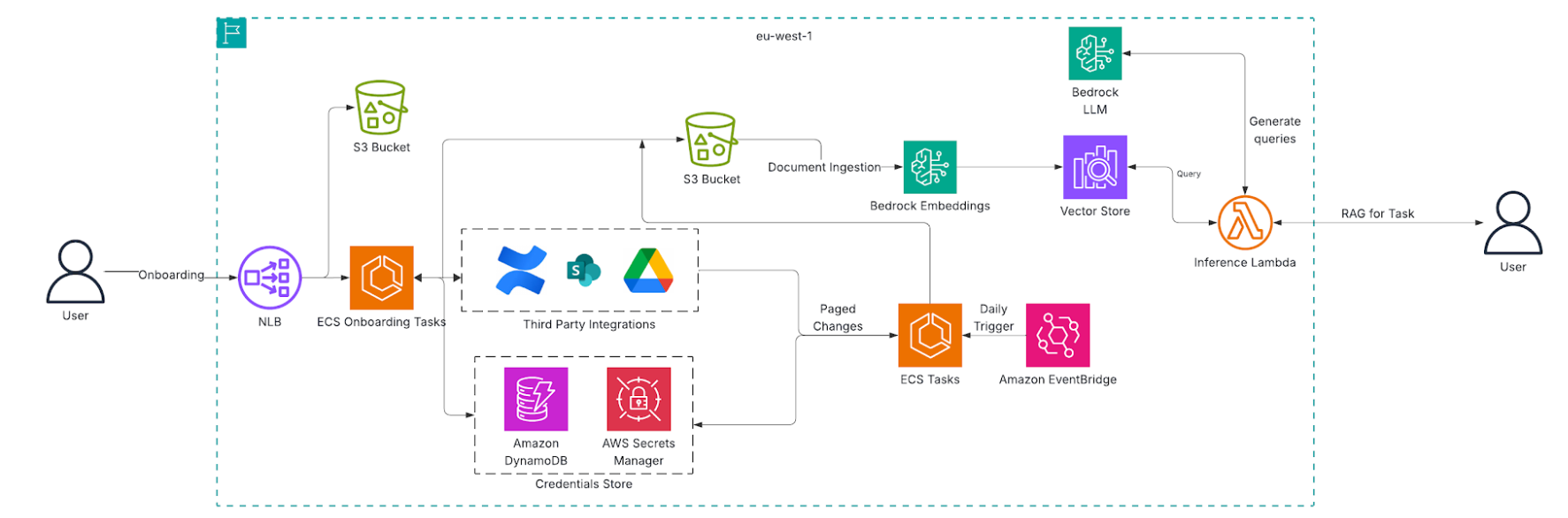

The platform was designed as a modular, event-driven RAG architecture built on AWS managed services, enabling scalable and secure content generation while maintaining strict tenant isolation.

At its core, Amazon S3 serves as the primary knowledge base, storing tenant-specific documents, while OpenSearch Serverless handles vector storage with logical separation through tenant-specific indexes. Amazon Bedrock powers both embedding generation and LLM-based content creation, and AWS Lambda orchestrates ingestion, deletion, and inference workflows. For workloads that exceed Lambda limits, such as large-scale onboarding and external integrations, Amazon ECS is used, with EventBridge managing scheduled synchronization.
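The logical separation described above can be illustrated with a small naming helper. This is a minimal sketch, not Collow's implementation: the `tenants/<id>/` key prefix, the `kb-<id>` index name, and the tenant ID format are all hypothetical conventions chosen for illustration. The source states only that documents are tenant-specific in S3 and that each tenant has its own index.

```python
import re

# Hypothetical tenant ID format: lowercase letters, digits, and hyphens only.
_TENANT_ID = re.compile(r"^[a-z0-9][a-z0-9-]{1,30}$")


def validate_tenant_id(tenant_id: str) -> str:
    """Reject IDs that could escape a tenant's namespace (slashes, wildcards, uppercase)."""
    if not _TENANT_ID.fullmatch(tenant_id):
        raise ValueError(f"invalid tenant id: {tenant_id!r}")
    return tenant_id


def s3_prefix(tenant_id: str) -> str:
    """Key prefix under which one tenant's documents are stored (assumed layout)."""
    return f"tenants/{validate_tenant_id(tenant_id)}/"


def index_name(tenant_id: str) -> str:
    """Name of the vector index holding only this tenant's embeddings (assumed scheme)."""
    return f"kb-{validate_tenant_id(tenant_id)}"
```

Deriving both names from one validated ID means every workflow resolves storage locations the same way, so a malformed ID fails early instead of reaching another tenant's data.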

The ingestion pipeline supports both real-time and scheduled workflows. Documents uploaded to S3 are automatically processed into embeddings and indexed, while external sources like Google Drive, SharePoint, and Confluence are integrated through ECS-based onboarding and periodic sync mechanisms. This ensures that all knowledge sources are consistently updated without impacting the core pipeline.
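The first steps of the S3-triggered path might look like the sketch below. It assumes a hypothetical `tenants/<tenant_id>/<path>` key layout and simple fixed-size character chunking. The source does not describe the actual key scheme or chunking strategy, and the embedding and indexing calls are omitted.

```python
from urllib.parse import unquote_plus


def tenant_and_key(record: dict) -> tuple[str, str]:
    """Extract (tenant_id, object_key) from one S3 event notification record.

    Assumes the hypothetical key layout 'tenants/<tenant_id>/<path>'.
    """
    # S3 URL-encodes object keys in event notifications (spaces arrive as '+').
    key = unquote_plus(record["s3"]["object"]["key"])
    parts = key.split("/", 2)
    if len(parts) != 3 or parts[0] != "tenants" or not parts[1] or not parts[2]:
        raise ValueError(f"key outside tenant layout: {key!r}")
    return parts[1], key


def chunk_text(text: str, size: int = 1000, overlap: int = 200) -> list[str]:
    """Split text into fixed-size character windows that overlap by `overlap`."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]
```

Each chunk would then be embedded and written to the tenant's own index. The overlap keeps sentences that straddle a chunk boundary retrievable from either side.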

When a user submits a query, the system dynamically generates contextual search queries using Bedrock, retrieves the most relevant content from OpenSearch, and then uses LLM capabilities to generate structured e-learning outputs such as course modules, lessons, and summaries.
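The three stages of this query flow can be sketched as a single function. The callables `expand`, `search`, and `generate` are hypothetical stand-ins for the Bedrock and OpenSearch calls, which the source does not detail. Merging hits by score is likewise an assumption rather than a description of the production ranking.

```python
from typing import Callable, Sequence


def answer_query(
    tenant_id: str,
    user_prompt: str,
    expand: Callable[[str], Sequence[str]],
    search: Callable[[str, str, int], Sequence[dict]],
    generate: Callable[[str, Sequence[str]], str],
    top_k: int = 5,
) -> str:
    """Expand the prompt into search queries, retrieve tenant-scoped passages, then generate."""
    seen: set[str] = set()
    passages: list[dict] = []
    for query in expand(user_prompt):
        # Every search is scoped to the caller's tenant.
        for hit in search(tenant_id, query, top_k):
            if hit["id"] not in seen:  # the same chunk can match several queries
                seen.add(hit["id"])
                passages.append(hit)
    # Keep the best-scoring passages overall as context for the model.
    passages.sort(key=lambda h: h["score"], reverse=True)
    context = [h["text"] for h in passages[:top_k]]
    return generate(user_prompt, context)
```

Passing the tenant ID into every search call, instead of relying on ambient state, keeps the isolation boundary visible at each retrieval step.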

The architecture is designed for scale: approximately 10–50 tenants on shared infrastructure, with each tenant managing roughly 50K–200K documents. The system can process 10K+ queries per day with average response times of 2–5 seconds, enabled by serverless auto-scaling and optimized retrieval mechanisms.
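To put these figures in perspective, the average number of requests in flight can be estimated with Little's law (arrival rate multiplied by time in system). The helper below is a back-of-the-envelope sketch that assumes traffic is spread evenly over the day, which real workloads are not.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_concurrency(queries_per_day: float, latency_s: float) -> float:
    """Little's law: mean in-flight requests = arrival rate x mean time in system."""
    return queries_per_day / SECONDS_PER_DAY * latency_s
```

For 10,000 queries per day this gives about 0.12 queries per second on average, and roughly 0.23 to 0.58 requests in flight at 2 to 5 seconds each. Real traffic is bursty, so peak concurrency would be higher than this average.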

The platform allows multiple clients to operate on shared AWS infrastructure while maintaining strict isolation of their data, knowledge bases, and access boundaries. It integrates Amazon S3, OpenSearch Serverless, AWS Lambda, Amazon Bedrock, and ECS to deliver scalable ingestion, intelligent retrieval, and automated content generation.

The solution is deployed within a single AWS Region (eu-west-1, Ireland) to meet data residency expectations, ensuring that all customer data, processing, and AI interactions remain within the defined geographic boundary.
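One simple way to check a residency constraint like this is to inspect the region field of each resource ARN. The guard below is an illustrative sketch only. The function name is hypothetical, and the source does not say how residency is enforced in practice (for example through account-level policies).

```python
ALLOWED_REGION = "eu-west-1"


def assert_in_region(arn: str, allowed: str = ALLOWED_REGION) -> None:
    """Raise if an ARN's region field names a region other than `allowed`."""
    # ARN layout: arn:partition:service:region:account-id:resource
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not an ARN: {arn!r}")
    region = parts[3]
    # Some ARNs (for example S3 buckets) carry an empty region field; those
    # need a separate check and are skipped here.
    if region and region != allowed:
        raise RuntimeError(f"{arn} is in {region}, expected {allowed}")
```

A check of this kind could run in a deployment pipeline against the resources a stack is about to create.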

Given the enterprise and multi-tenant nature of the platform, the architecture is designed around strong digital sovereignty principles, ensuring data control, auditability, and compliance by default.

Business Outcome

With the new RAG-based CollowCreator platform, Collow can offer its customers an advanced system for turning knowledge into digital learning solutions and delivering personalized learning experiences efficiently. The platform's impact spans several areas:

Operational Impact

- Reduced manual effort in content preparation by 60–70% through automated ingestion and structuring

- Near real-time content availability for S3-based ingestion

- Onboarding of new tenants without infrastructure changes

- Improved content retrieval efficiency by 3–5x compared to manual search workflows

Security & Sovereignty Impact

- Strict tenant-level data isolation across shared infrastructure

- Data residency within EU region, aligning with regulatory expectations

- Full auditability through centralized logging and traceable workflows

- Standardized architecture that simplifies compliance validation and governance

Strategic Impact

- Collow can now offer a sovereign-ready GenAI SaaS platform

- The solution is ready for delivery into regulated markets (EU, enterprise, public sector)

- Integration with multiple enterprise content systems without redesign

- A scalable foundation for further developments such as multi-region deployments

Disaster Recovery & Survivability

The current architecture is designed for high availability using managed and serverless services:

- Core services (S3, OpenSearch Serverless, Lambda, Bedrock) provide built-in durability and fault tolerance

- Event-driven pipelines ensure automatic recovery from transient failures

- ECS-based ingestion tasks can be retried or re-triggered without data loss
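Retrying without data loss or duplication generally relies on two ingredients: a bounded retry loop and writes that are safe to repeat. The sketch below shows both under stated assumptions. The `chunk_id` scheme and the backoff parameters are hypothetical, and how a deterministic ID is applied depends on the vector store in use.

```python
import hashlib
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def chunk_id(tenant_id: str, key: str, position: int) -> str:
    """Deterministic ID, so a re-run can recognize chunks it has already written."""
    return hashlib.sha256(f"{tenant_id}\n{key}\n{position}".encode()).hexdigest()


def with_retries(
    task: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `task`, retrying on any exception with exponential backoff."""
    for attempt in range(attempts):
        try:
            return task()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be >= 1")
```

Because the same tenant, object key, and position always produce the same identifier, a re-triggered task can replace or skip work that an earlier attempt completed.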

Planned Enhancements

- Multi-region replication for S3 and OpenSearch to support cross-region failover

- Backup and restore strategies for tenant indexes and metadata

- Defined RTO/RPO targets aligned to business requirements

- Automated recovery workflows using infrastructure-as-code